Non-contact temperature measurement for industry and R&D

For more than 20 years, Optris has developed and manufactured infrared devices for non-contact temperature measurement, including infrared cameras and stationary industrial IR thermometers for area and point measurement.

Our product portfolio comprises infrared measurement devices for a range of industrial applications as well as research and development. Together with our free thermal analysis software, these devices enable continuous monitoring and control of virtually every manufacturing process, and they help reduce production costs through targeted process optimization.

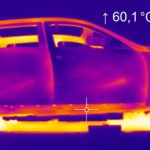

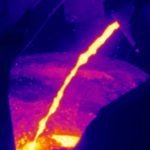

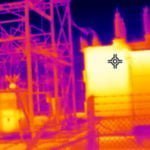

Process and product temperatures are important physical indicators in manufacturing, and monitoring them helps maintain a high level of quality on the production line.

Optris products are used for non-contact temperature measurement in many different fields, including the plastics industry, the food industry, the solar industry, and life sciences.

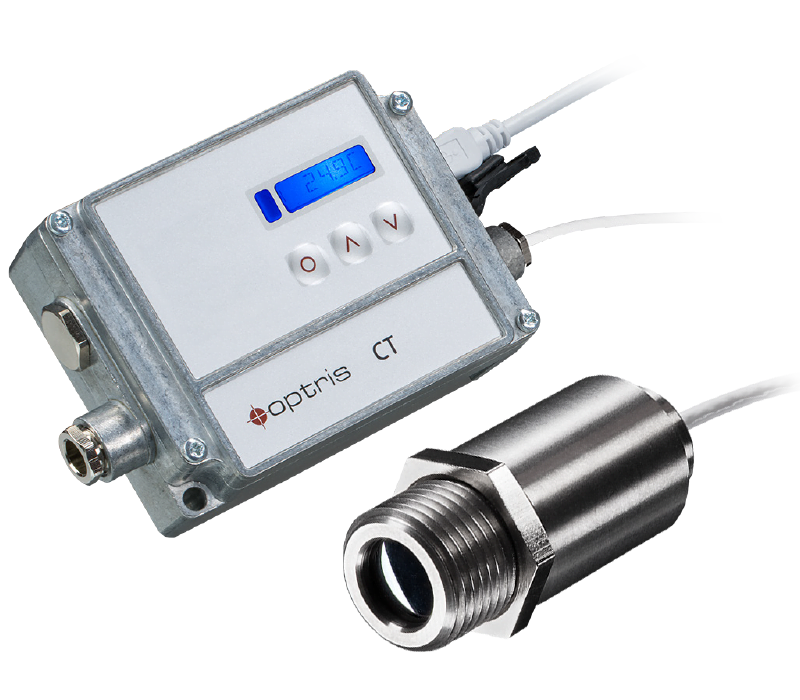

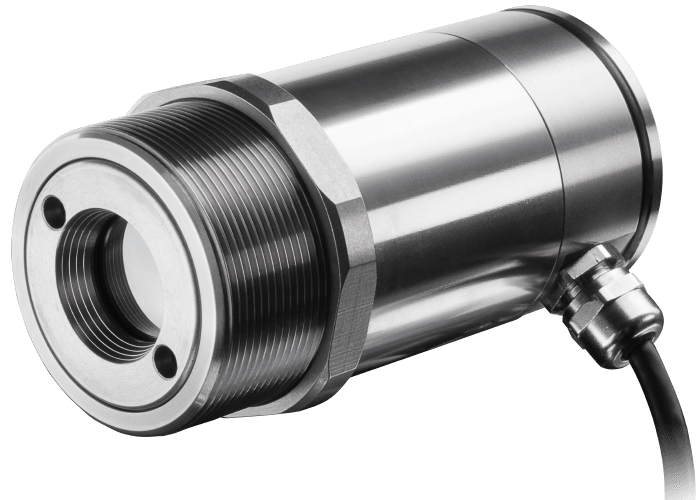

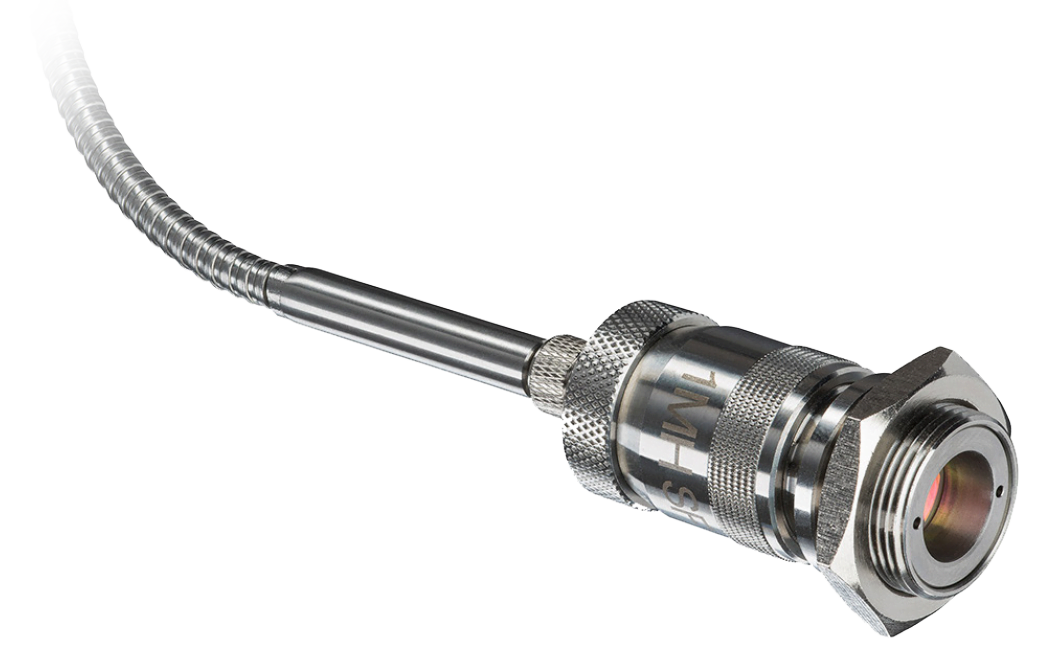

Infrared thermometers / pyrometers for

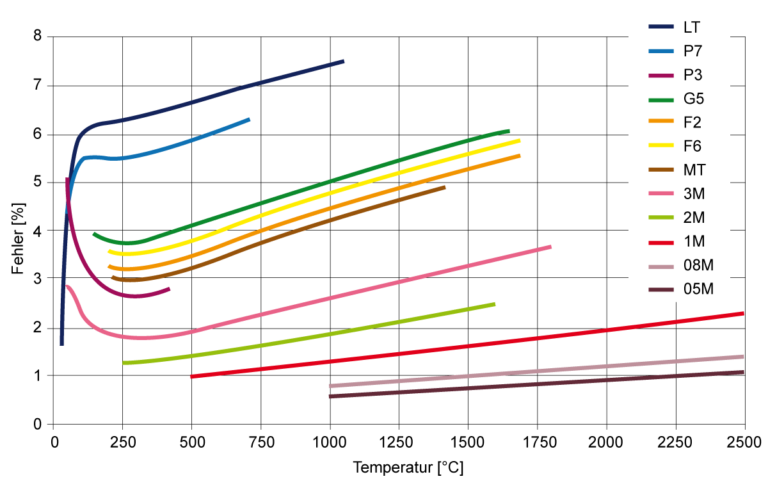

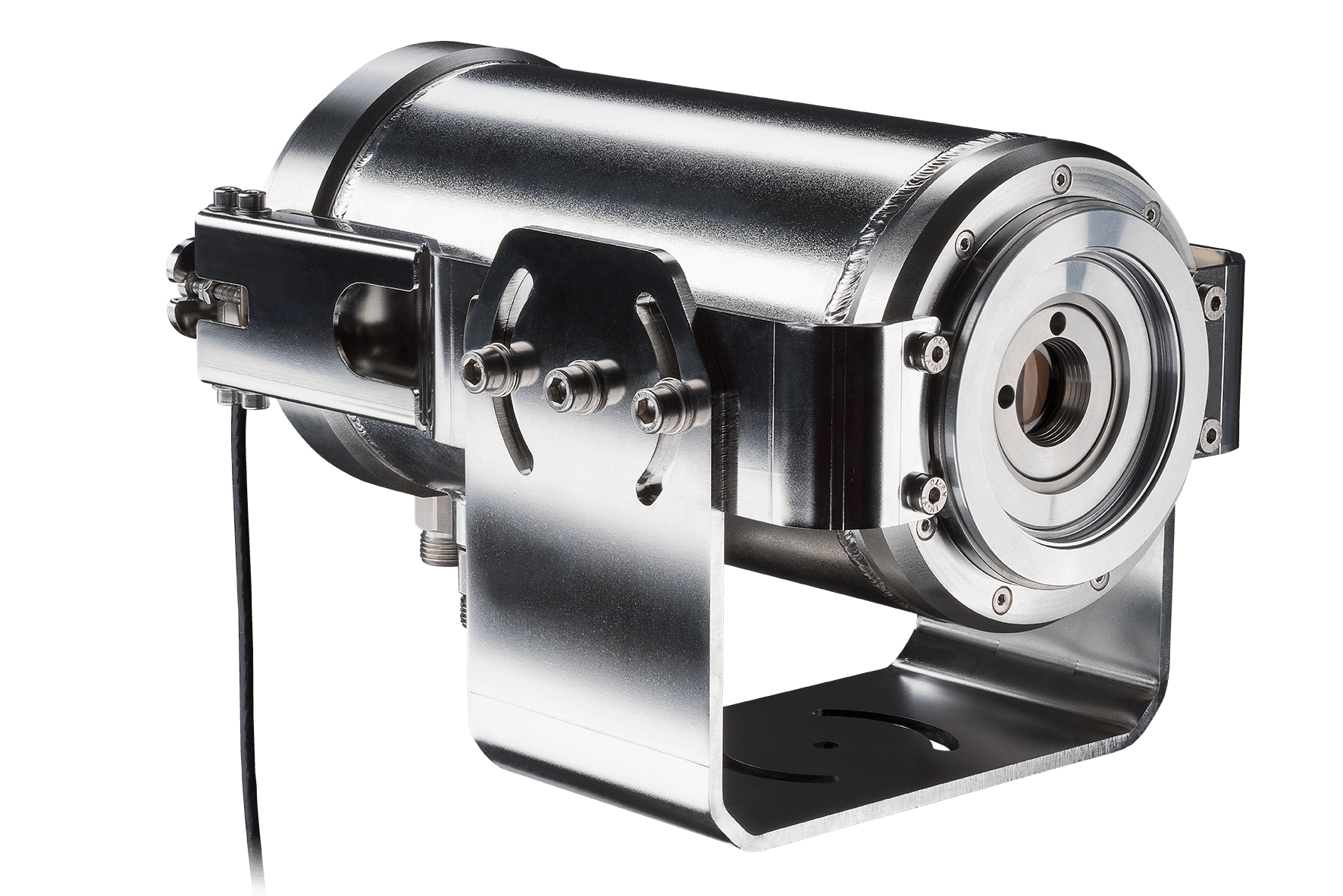

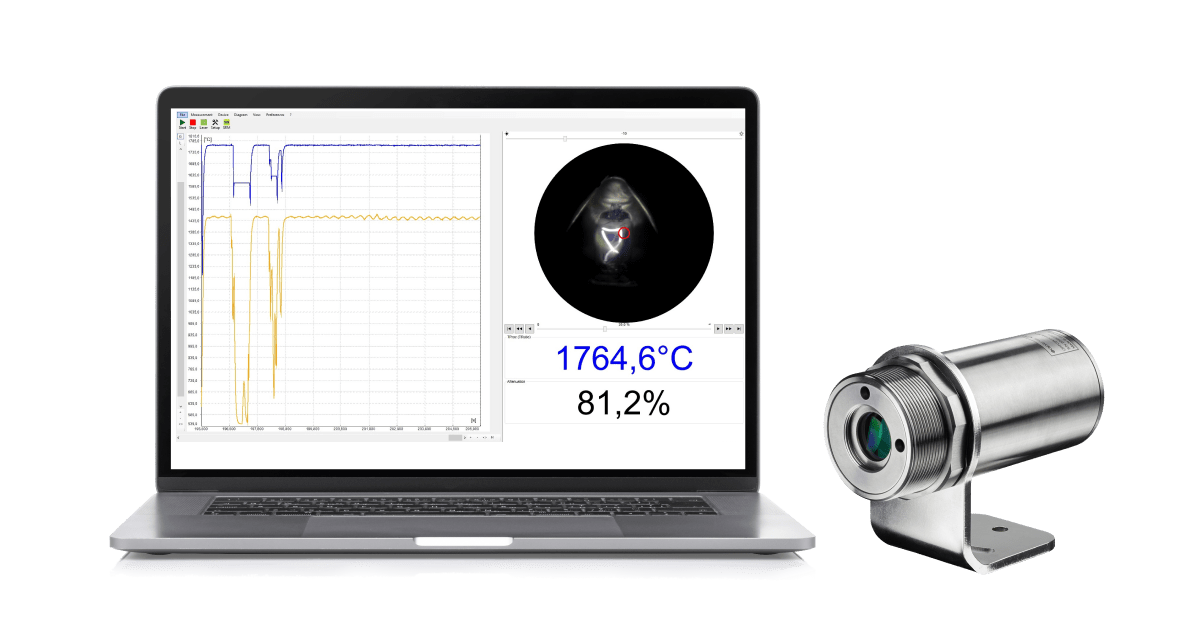

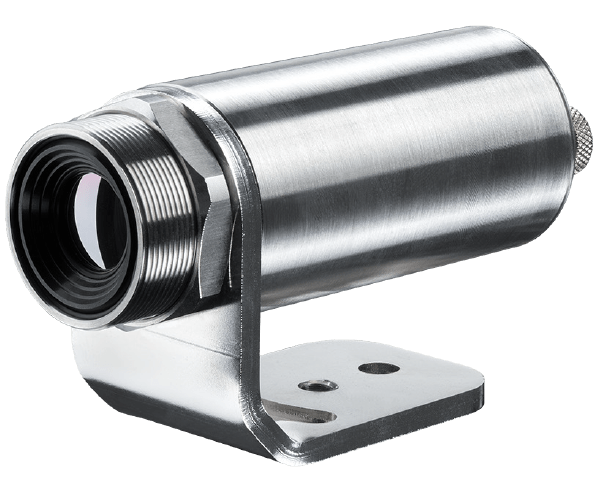

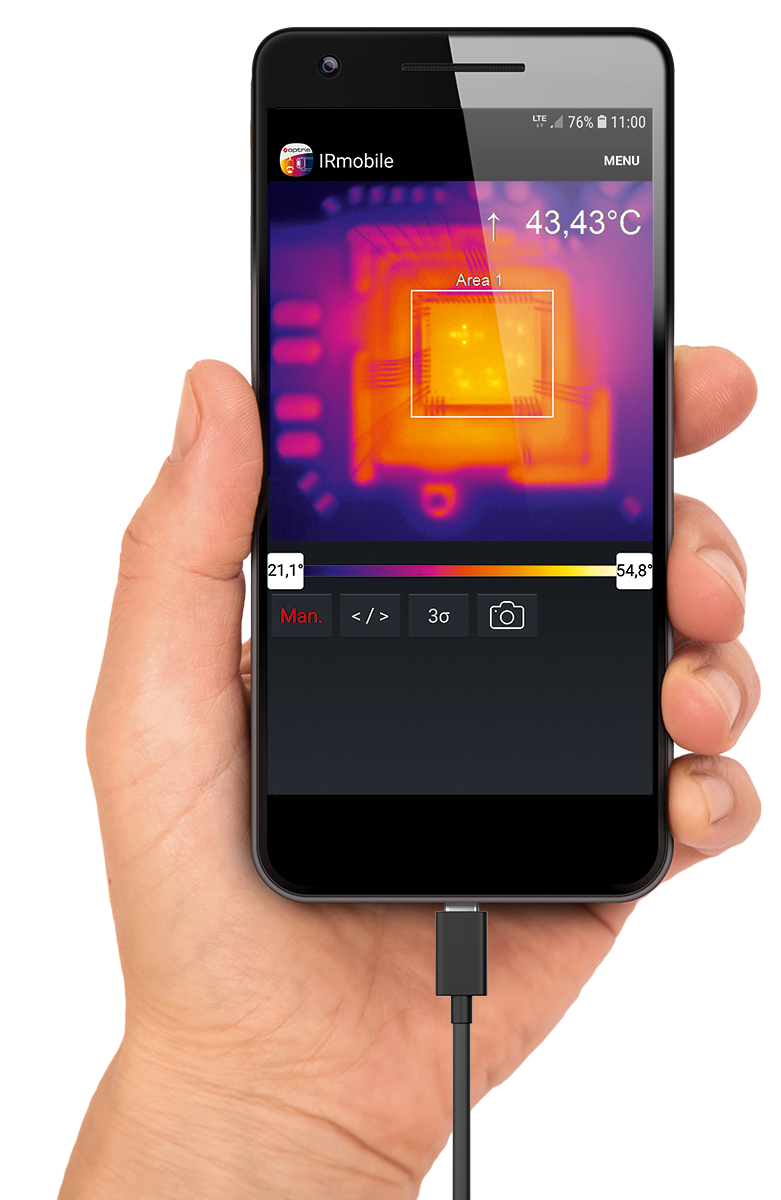

Optris infrared thermometers and pyrometers for spot measurements are particularly well suited for precise temperature monitoring of industrial manufacturing processes, research and development, and function checks of a diverse range of devices and systems.

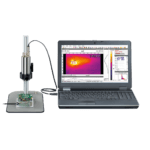

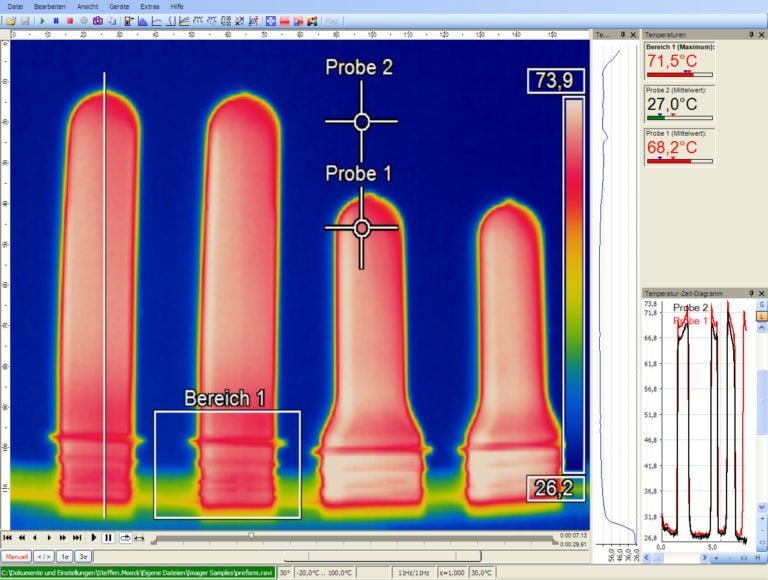

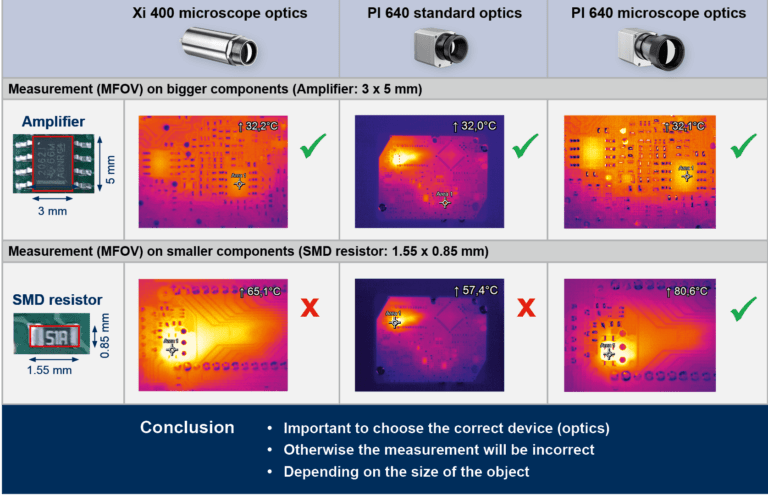

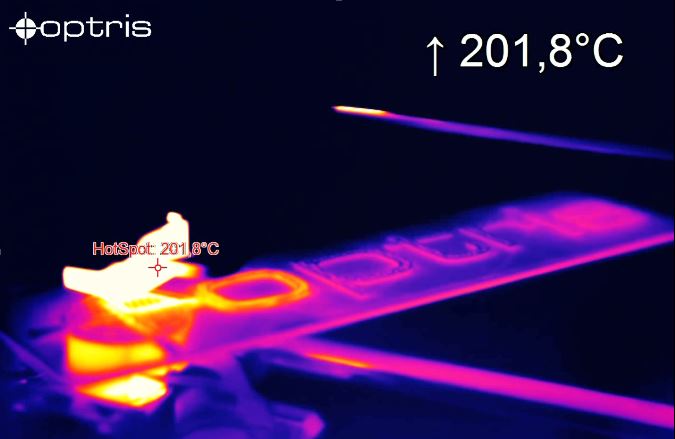

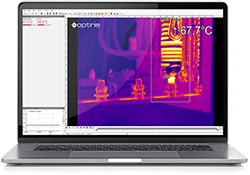

Infrared Cameras / Thermal imagers for

Optris infrared cameras are fully radiometric, stationary thermographic systems with an excellent price-performance ratio. The cameras connect to a PC via USB and are ready to use immediately. Temperature data is displayed in the license-free analysis software optris PIX Connect.
